- Jobs

- Tools

  - Resume: Create your job-winning resume using our free resume builder.
    - Resume Builder: Make a resume for free.
    - Resume Templates: Access our extensive library of professional & ready-to-use templates.
    - Resume Examples: Get inspired by real resume examples to create your own.
    - Occupation Guide: Access resume writing guides tailored for different professions.
    - Resume Help: Get expert advice on all things resume from our team of recruitment specialists.
  - Portfolio: Showcase your skills and projects with a professional portfolio.

- Resources: Success Stories, Business Excellence, About CakeResume, Featured Reads

- Hire

- Pricing

- Build your Network

- Download our App

The Resume to Land Your Dream Job

Make an impressive resume in 15 minutes. Download PDF for free.

Build My Resume Now

About

Top Searches

IT

Design

Management / Business

Marketing / Advertising

Bio, Medical

Education

Engineering

Media / Communication

Finance

Catering / Food & Beverage

Construction

Customer Service

HR

Logistics / Trade

Manufacturing

Other

Public Social Work

Recommended Articles

Resume Examples & Writing Guide

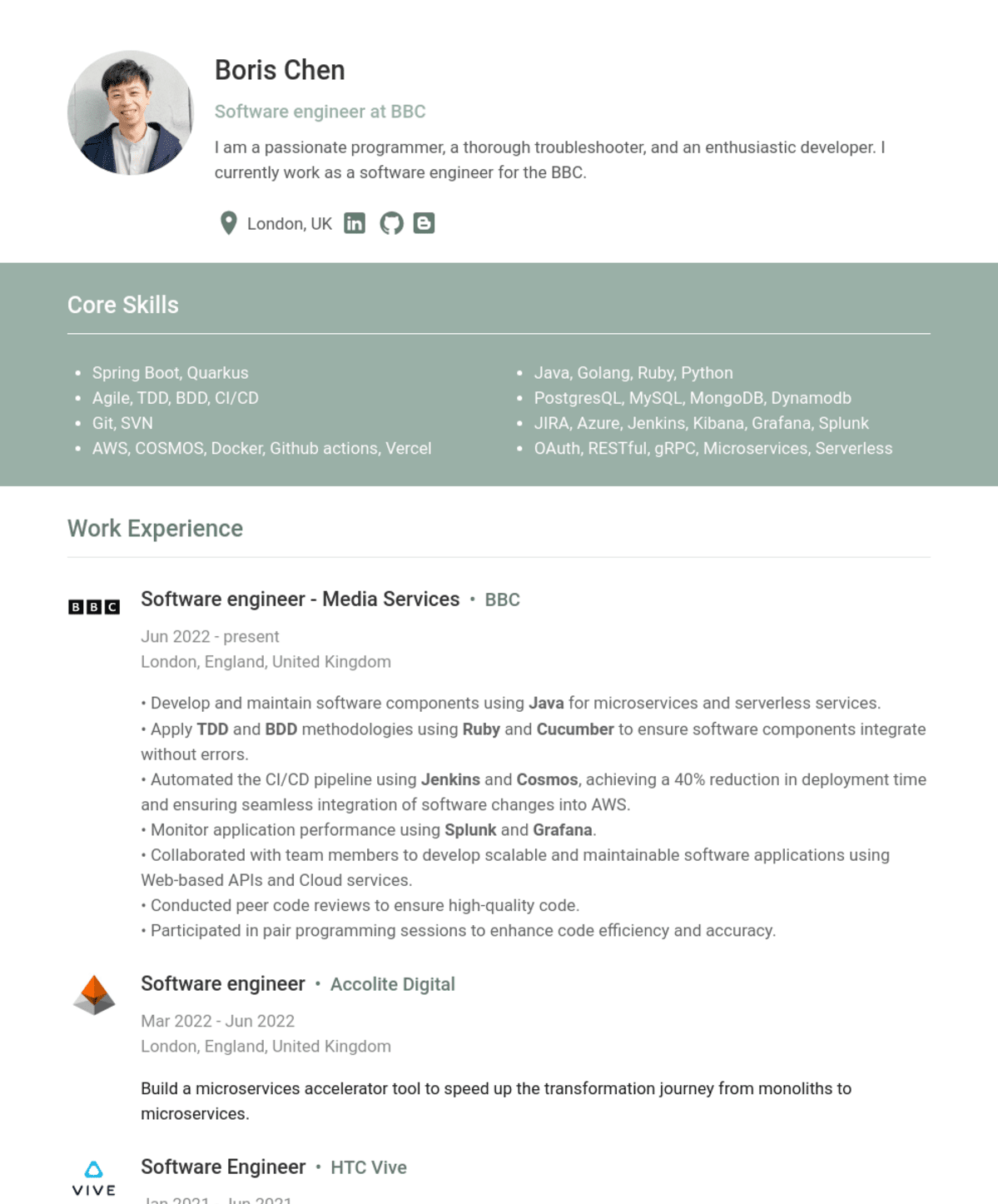

Java, Spring Boot, AWS, Python, Ruby, Golang, MongoDB, PostgreSQL, MySQL, DynamoDB, Database, Git, SVN, Junit, TDD (Test-driven development), BDD - Cucumber, Data Structures and Algorithms, Software engineering, SQL, NoSQL, RESTful API, Linux, Agile, Docker, Jenkins, COSMOS, Azure, Vercel, Github actions, CICD, Jira, Swagger, Kibana, Microservices, Heroku, Maven, OAuth2, gRPC, OpenCV, Tesseract OCR, Quarkus, Spring Framework, SQS, Grafana, Serverless

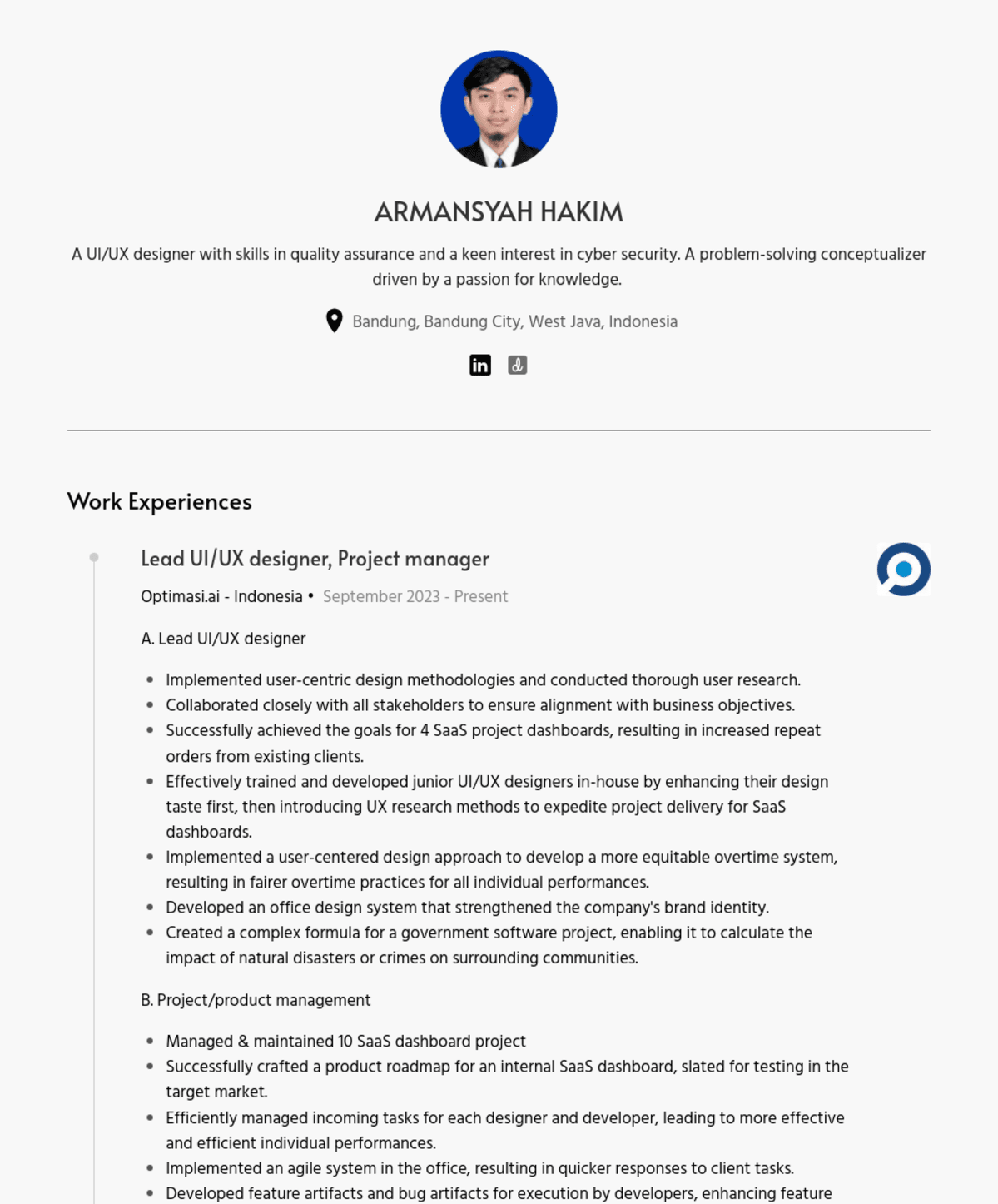

Figma, UI/UX Design, UX Research, UI Designer, Product Owner, scrum master, Adobe Photoshop, Adobe XD, Data Analysis, Data Visualization, Excel, Wireframing and Prototyping, Photoshop, Web Design, Front-end Development, Selenium WebDriver Automation, Software Quality Assurance, software quality engineer, manual testing, Scrum Methodology

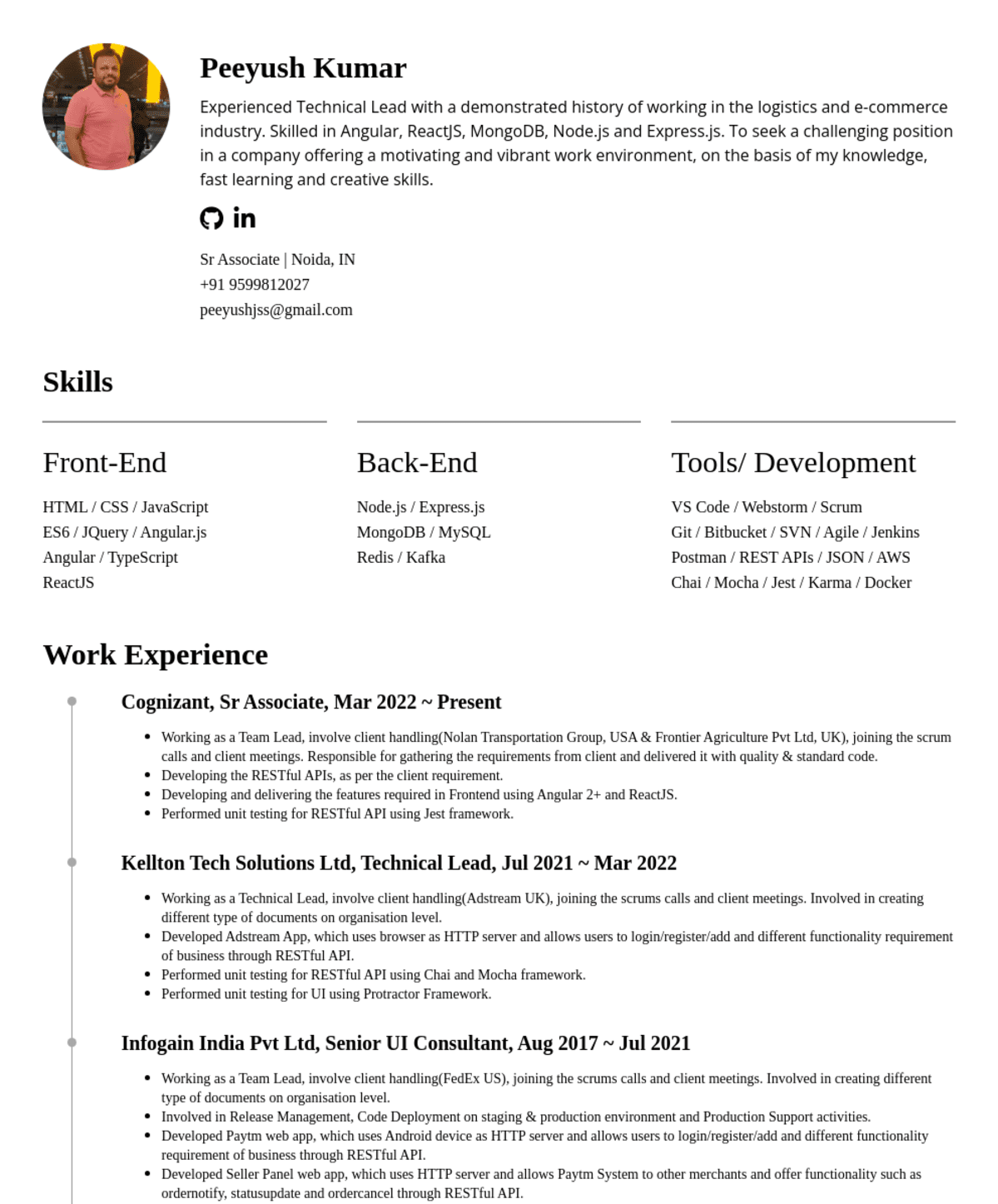

Java, Spring, Spring-Boot, Hibernate, Kafka, Android, Objective-C, iOS, Python, JS, NodeJS, ExpressJS, RequestJS, SequelizeJS, AjvJS, ReactJS, ReduxJS, SagaJS, AxiosJS, JavaFX, JPA, RabbitMQ, HTML, CSS, XML, UML, XSD, JSON, SOAP, SIP, RTP, RTMP, RTSP, WebSocket, DevOps / CI / CD, Ansible, Rancher, Jenkins, Prometheus, Grafana, Docker, Docker Swarm, Docker Machine, Europa, Redmine, Kubernetes, Vault, Helm, Gitlab, MySql, Postgres, Mongo, Redis, ElasticSearch, Windows, Linux, MacOS, NetBeans IDE, Eclipse, IntelliJ IDEA, Software Development, Software Design, Database Design, Business Logic Design, Software Engineering, Software Architect, Team-Lead, Tech-Lead, CTO, So-Called RESTful, RPC

Resume

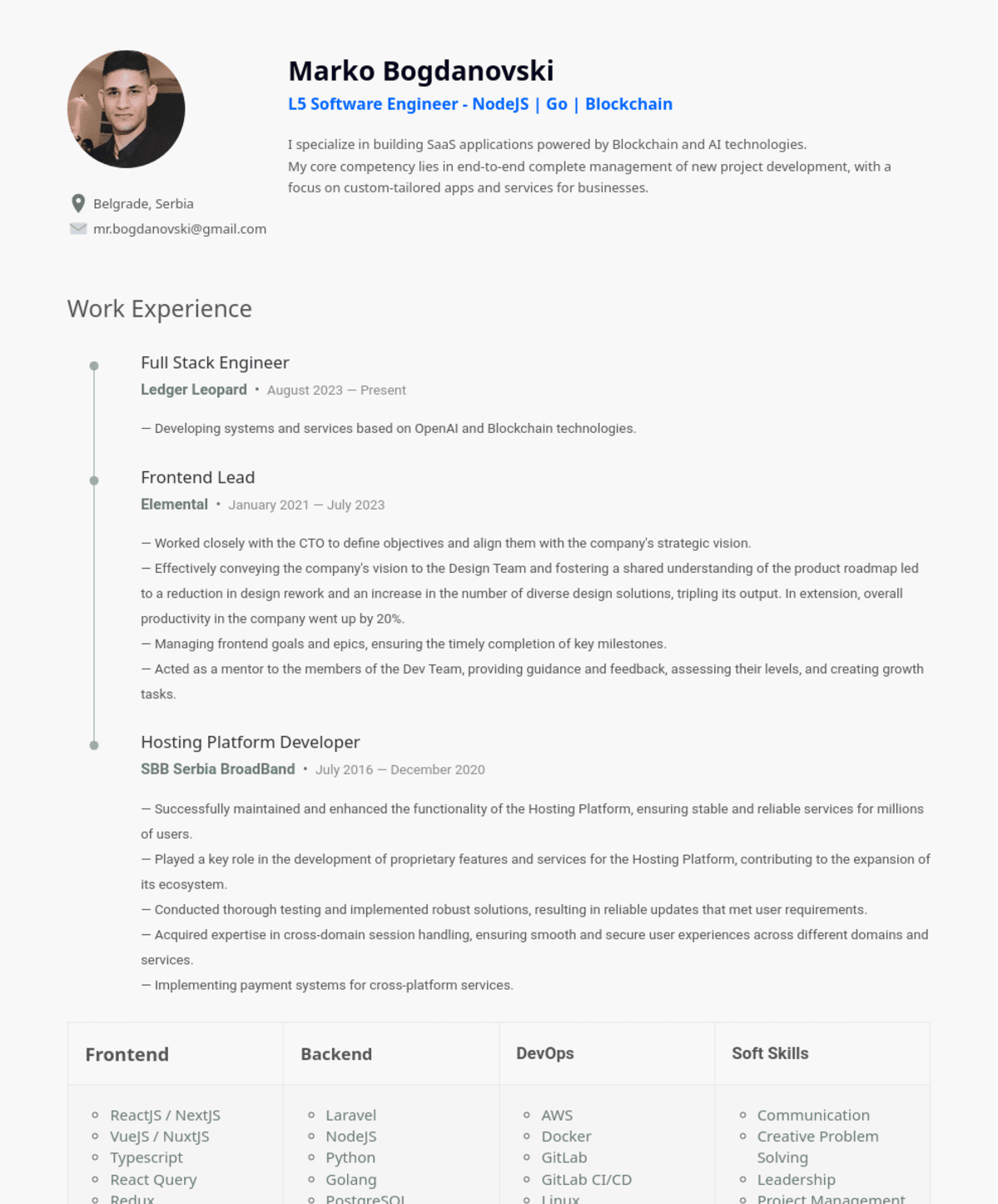

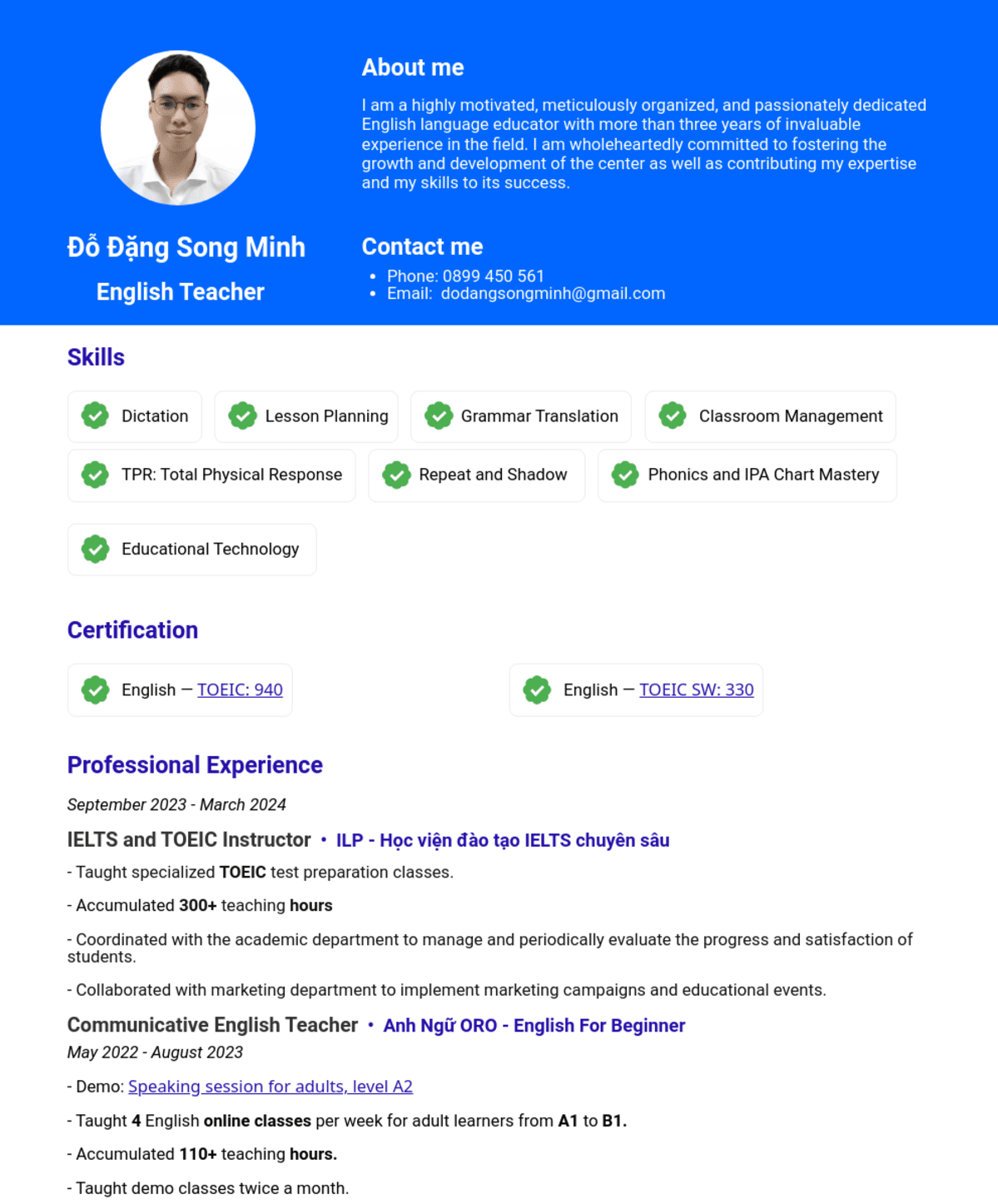

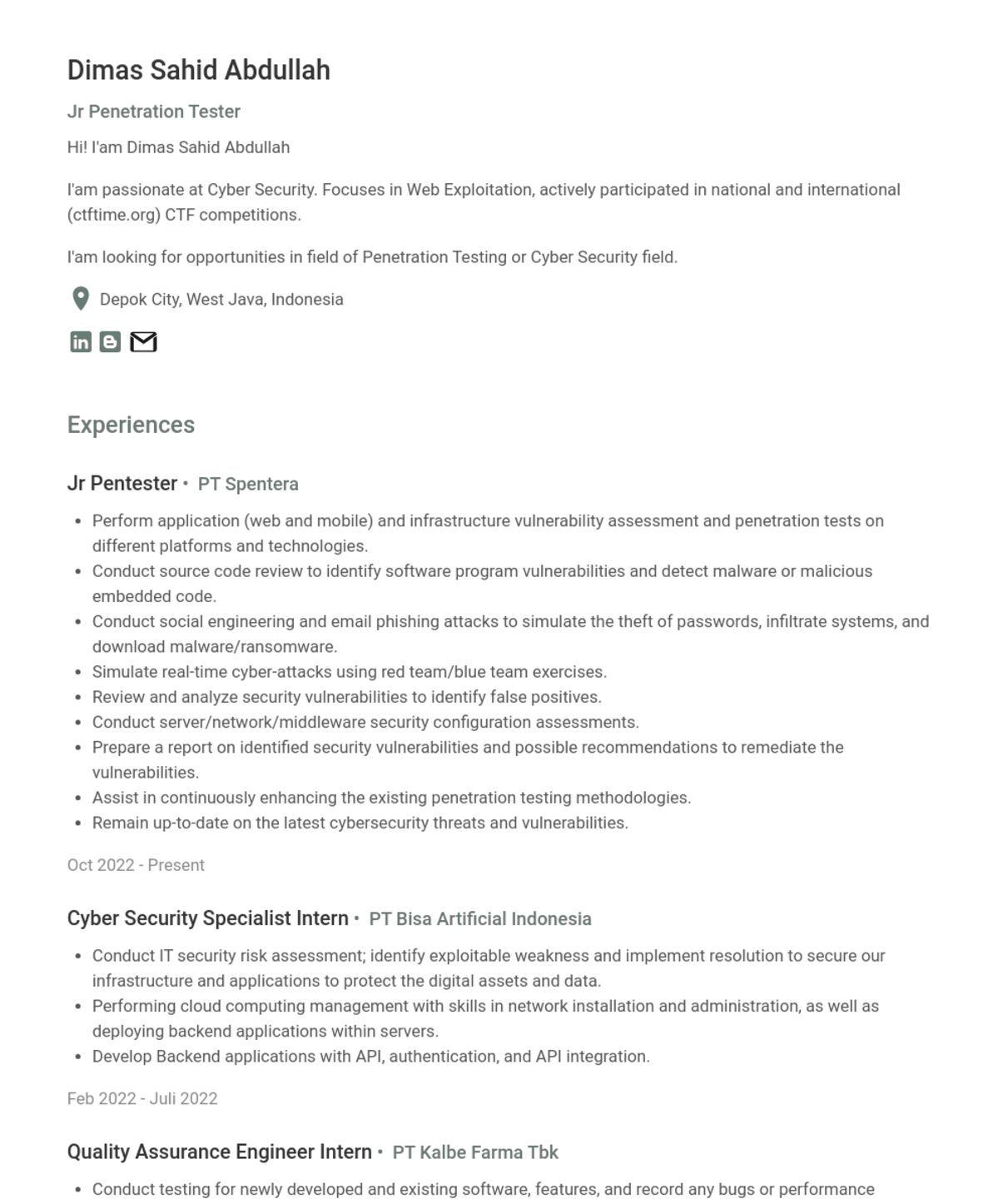

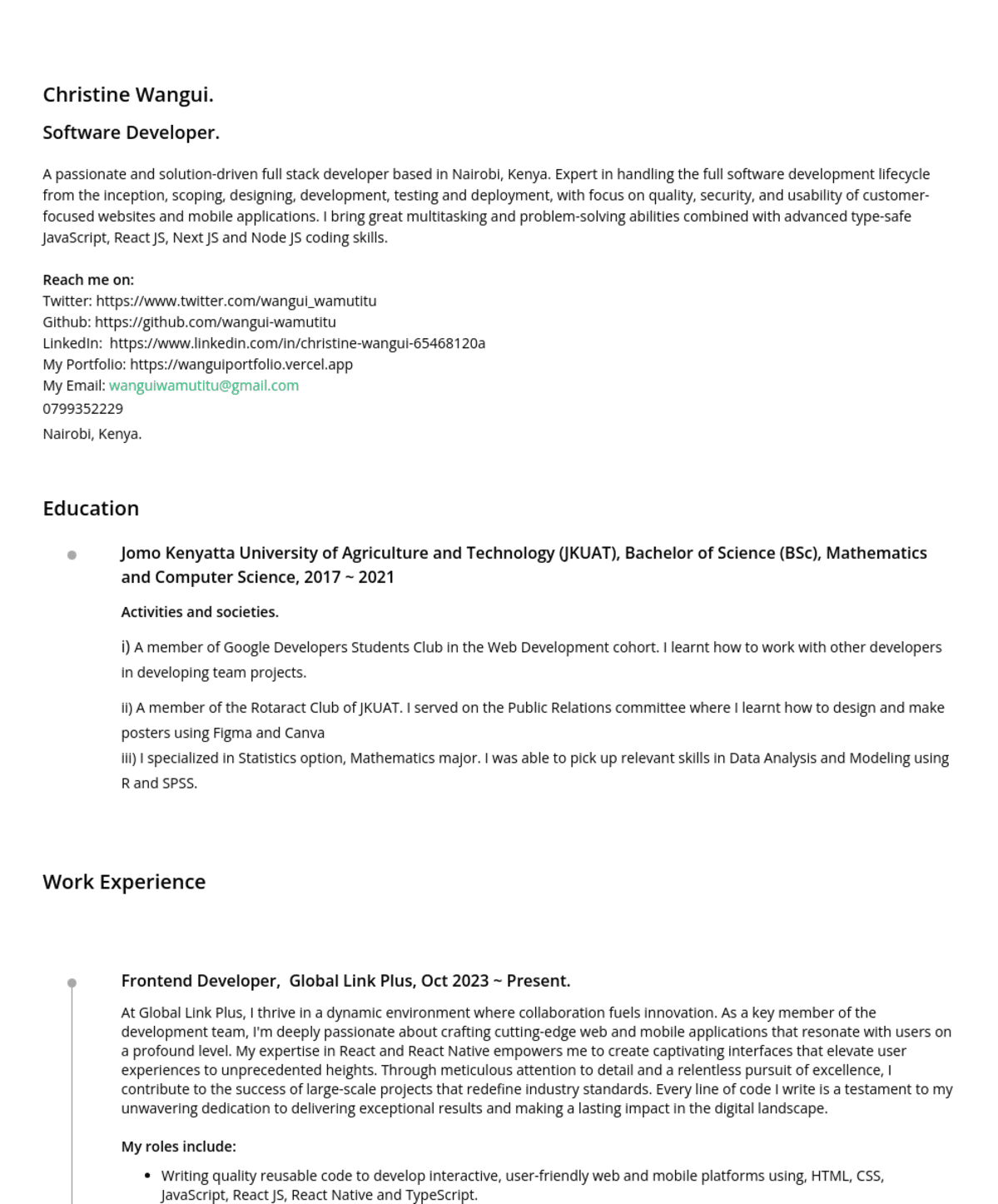

Profile